Escaping the Artificial Hivemind: The problem with critiquing model outputs over fundamentally creating

How to maintain your human premium in an emerging AI slop landscape

The other day, I was ideating a story with Claude and asked it to generate a first draft. I didn’t think much about it until the next day, when I saw a Nano Banana photo of a woman wearing a conference badge. I squinted when I noticed there was a name on the badge, and that name was Maya Chen. Waaait a second… *pulls up Claude*

Maya Chen also happened to be the name of the character in my Claude story.

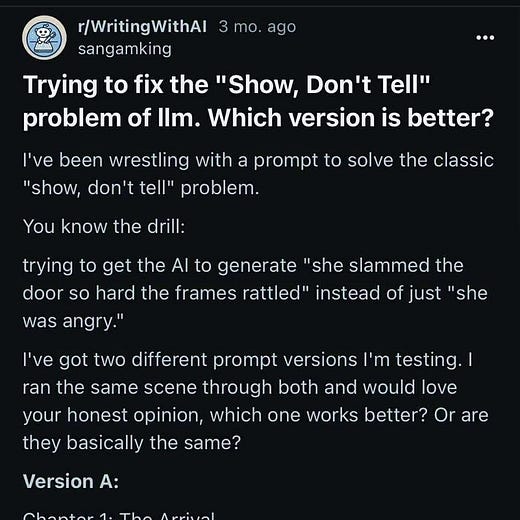

Puzzled, I went to ChatGPT and asked it to generate a list of character names for a story. Lo and behold, on the first try, Maya Chen was there, sprinkled innocuously among a list of other seemingly random names like Leo Ramirez and Ben Lawson.

She wasn’t a Johnny Appleseed or Harry Potter… who was Maya Chen, and why were models from different labs all one-shotting her?

I learned this phenomenon is called “inter-model homogeneity,” and it’s the exact focus of this year’s best paper at NeurIPS on the Artificial Hivemind. Inter-model homogeneity is the tendency for different language models to converge on the same ideas in their outputs, even for open-ended tasks, due to similar training data and post-training objectives. The paper warns that “model-level convergence may propagate into human expression, amplifying uniformity in linguistic and cognitive patterns at scale.”

Maya Chen is just the name of a character, but imagine the more subtle ways this inter-model homogeneity might ripple into our thought patterns until our individual, idiosyncratic distributions of language and experience are shaved away. As a techno-optimist and creator, I’ve reflected a lot on this. In this post, I lay out the current value shift from creating to critiquing, the problem with that shift for open-ended natural language tasks, and, with those in mind, how to think about preventing AI-assisted creations from degrading into slop.

Thought 1: There is an emerging value shift from critiquing to creating in the age of generative AI

Do you remember those in-class essay days in school? Everyone would come into the classroom, grab a stack of loose-leaf paper from the front of the room, and have to turn in a handwritten essay by the end of the class period. It seems archaic now (handwriting meant that if we wanted to add a sentence, we’d have to squeeze it into the margins with a floating caret and cross our fingers the grader would forgive us), but it was a form of pure creation. From nothing into something. Critiquing was only the role of the grader, who reviewed our creative outputs (the essays) with an entirely different headspace: searching for flaws as opposed to trying to come up with an argument in the first place.

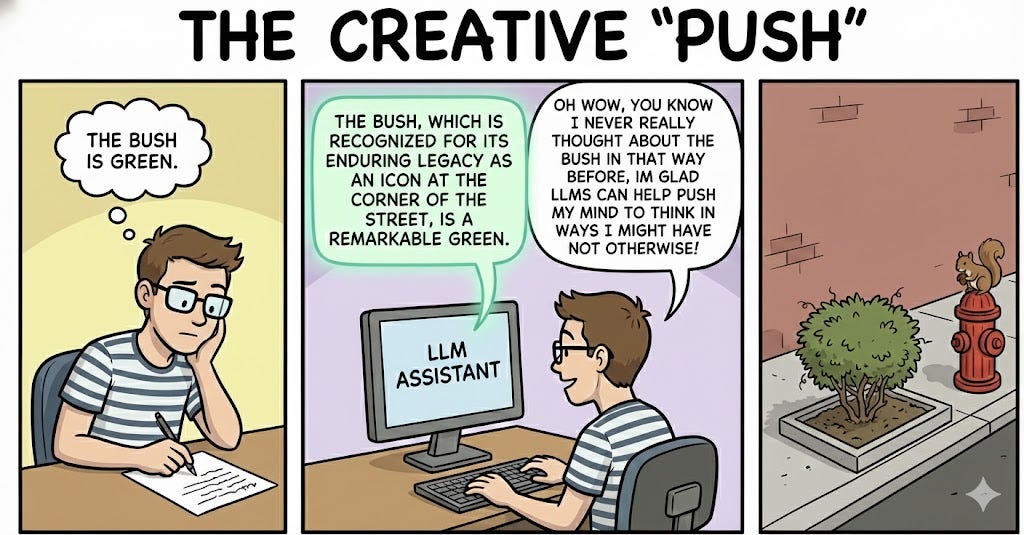

But now, we value something different when creating an original essay: critiquing. Ask a bunch of different LLMs to write an essay. Each essay on its own is terrible, but find a good sentence in this one and another in that one, and maybe with some wrangling (and another pass through the LLM) the final essay can turn out to be alright. You’re critiquing rather than creating, judging fully formed ideas as they arrive in their own packages, and the cost and effort of creating (getting each packaged idea) feels like zero. It is curating the parts that are in good ‘taste’ that ultimately leads to the downstream value. However, after doing this for some time (especially as a student who just wanted to get her assignments done), I realized:

Thought 2: Something is lost when we only choose from model outputs instead of creating from scratch

There’s a ‘signs of AI writing’ list that aggregates unnatural ways LLMs tend to express ideas that a human probably wouldn’t have come up with on their own. For example, LLMs may hype up otherwise trivial things (using phrases like ‘enduring legacy’ to describe something like a bush). Though a human would not have proposed this form of flattering wording, when picking and choosing from model outputs, one might just read it and think, oh, well, it’s correct English and it kind of makes sense, I guess that bush really has an enduring legacy that I never really thought about before. And accept the sentence as their own.

Similar to arguments we’ve heard about how writing notes helps you remember them more than typing them on a laptop, passively reading and accepting LLM responses underactivates the brain compared to coming up with what to express in the first place. But this isn’t just about rejecting model outputs because it’s ‘good’ for your brain in the long run -- it’s about celebrating the unique distribution shaped by your particular lived experiences and recognizing when individuality can contribute more than the hivemind.

In the process of creating, not just critiquing model outputs, you have to pull from the distribution of everything you’ve experienced -- the corny talk show your parents watched, the observations you make from the corner of a party -- not the collective experience of the internet. And for open-ended tasks, there is value in the unpredictability born from lived experience. It’s nice to catch people off-guard and make them laugh or think.

Thought 3: Rejecting AI in the natural language-driven parts of the generative media creation lifecycle is what maintains the human premium and prevents the creation from degrading into slop

The generative media creation process is composed of numerous steps. There are some parts of this process where it makes sense to value critiquing over creating, for example, when you have three outputs from a video model and you have to choose which clip has the best ‘acting’ in it. But I believe that any part of the process that is driven by natural language should be created by a human to prevent the creation from turning into slop. Oftentimes a natural language seed fundamentally shapes the generative creation (for example, the script of an AI film or the lyrics to an AI song influence the final creation, whether through prompt chaining or conditioned generation). The seed needs to be fruitful. Natural language is closest to the raw human thought running in our minds, with the highest information density compared to other mediums like video.

You can imagine the process as a fill-in-the-blank adventure. Without a human driving the natural language influences, the final outcome is slop, as the hivemind fills in the blanks with boring, predictable directions. This is why I don’t believe in full automation (like a one-click movie). I think a lot of the anger directed towards generative media comes from this reductionist one-click view. For example, a common argument against AI music is that the resulting audio has “no soul” because AI-generated lyrics are trite. But you don’t really hear arguments that AI music is bad because it can help put a melody and beat to words.

☼

I once went to an author’s talk where someone asked her how she uses AI in her writing process. She said that whenever she writes something, she generates a first draft with AI, and then makes sure that whatever she writes does not sound like that.

The next time you consider turning to an LLM, think: which idea space do I want to draw from? Do I want to draw from the hivemind, which could be useful for a task with a verifiable answer like coding or math, or do I want to draw from my lived experience, which would steer my creation in a genuinely interesting way? While it’s tempting to take the easy way out with one-click first drafts, the latter builds your confidence as a creative in the long run. Otherwise we’ll all end up writing stories about Maya Chen.

Nice perspective. I think a lot of power users of these LLMs have figured out how to start from a close approximation of their own distribution via prompting + context engineering and go from there. Ah! This is the first I have heard of the term "inter-model homogeneity", but it makes sense. You can see the same with diffusion models of the same size/generation: you get very similar outputs for the same text prompts across models.